RAID (redundant array of independent disks) is a technology that combines multiple disk drives into arrays, or RAID volumes, by spreading (striping) data across the drives. RAID can improve performance by using the combined throughput of several drives to access the dataset. It can also increase data reliability and availability by adding parity to the dataset and/or mirroring one set of drives onto another, which helps prevent data loss or application downtime when a drive fails.
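To make striping and parity concrete, here is a minimal Python sketch of a single stripe with one XOR parity chunk (the idea behind RAID 5). The function names, chunk size and drive count are illustrative assumptions, not any product's on-disk layout.

```python
from functools import reduce

def xor_blocks(blocks):
    """XOR equal-length byte blocks together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))

def make_stripe(data: bytes, data_drives: int, chunk_size: int):
    """Split data into one chunk per data drive and append one parity chunk."""
    capacity = data_drives * chunk_size
    if len(data) > capacity:
        raise ValueError("data does not fit into a single stripe")
    padded = data.ljust(capacity, b"\0")  # zero-pad the last chunk
    chunks = [padded[i * chunk_size:(i + 1) * chunk_size] for i in range(data_drives)]
    return chunks + [xor_blocks(chunks)]

def rebuild_chunk(stripe, failed_index):
    """Recover the chunk of one failed drive by XOR-ing all surviving chunks."""
    survivors = [chunk for i, chunk in enumerate(stripe) if i != failed_index]
    return xor_blocks(survivors)

# Four hypothetical data drives plus one parity drive, 8-byte chunks
stripe = make_stripe(b"RAID stripes data across drives", data_drives=4, chunk_size=8)
lost = 2  # pretend the third drive failed
recovered = rebuild_chunk(stripe, lost)
print(recovered == stripe[lost])
```

Because XOR is its own inverse, the same routine rebuilds either a data chunk or the parity chunk, as long as only one chunk per stripe is missing.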

RAID technology has been around for decades and is well understood in traditional configurations with mid-capacity HDDs. However, the storage industry is evolving fast, and new storage technologies pose many challenges for RAID in 2024.

As HDDs grow in capacity every year, the reliability of parity RAID goes down, because rebuilding data after a drive failure takes longer. Today’s 20+ TB drives may take days or even weeks to reconstruct, and the longer the rebuild runs, the higher the probability of losing another drive in the group during this stressful time.
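A back-of-the-envelope estimate shows how rebuild time scales with capacity. The sketch below simply divides drive capacity by a sustained rebuild rate; the three rates are assumptions chosen for illustration (an otherwise idle array versus a rebuild throttled by production traffic), not measurements.

```python
def rebuild_hours(capacity_tb: float, rebuild_mb_per_s: float) -> float:
    """Hours needed to rewrite a whole drive at a constant rebuild rate."""
    capacity_mb = capacity_tb * 1_000_000  # decimal units: 1 TB = 1,000,000 MB
    return capacity_mb / rebuild_mb_per_s / 3600

# Assumed sustained rebuild rates in MB/s (hypothetical values)
for rate in (200, 50, 10):
    hours = rebuild_hours(20, rate)
    print(f"{rate:>4} MB/s: {hours:7.1f} hours ({hours / 24:.1f} days)")
```

Under these assumptions a 20 TB drive needs more than a day even at 200 MB/s, and a rebuild throttled to around 10 MB/s stretches past three weeks.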

Another disruption to the status quo is NVMe. Because these drives bring huge increases in throughput, a lot of computational power is needed to calculate parity information at their speed and to rebuild data after a drive failure. This is one of the reasons why RAID is not straightforward with NVMe.

Yet another level of complexity appears when NVMe is connected over a network. Transient network issues are hard to distinguish from drive IO errors, so developers must adapt the RAID logic to the networked model.
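One simple way to picture such an adaptation is a grace policy: a drive is not marked as failed on the first error, only after several consecutive attempts have failed. The Python sketch below is purely hypothetical (the function name, status strings and retry counts are invented for illustration) and does not describe how any particular RAID engine handles networked drives.

```python
import time

def read_with_grace(read_fn, max_attempts=3, backoff_s=0.0):
    """Return ("ok", data), or ("drive_failed", last_error) after max_attempts errors.

    Each error is treated as possibly transient until the attempts run out.
    """
    last_error = None
    for attempt in range(max_attempts):
        try:
            return "ok", read_fn()
        except OSError as err:  # TimeoutError is a subclass of OSError
            last_error = err
            time.sleep(backoff_s * (2 ** attempt))  # exponential backoff between tries
    return "drive_failed", last_error

# Simulated networked drive: the first two reads hit a network timeout
calls = {"n": 0}

def flaky_read():
    calls["n"] += 1
    if calls["n"] < 3:
        raise TimeoutError("simulated network timeout")
    return b"payload"

# Simulated drive with a persistent media error
def dead_read():
    raise OSError("simulated media error")

print(read_with_grace(flaky_read)[0])
print(read_with_grace(dead_read)[0])
```

The trade-off is visible even in this toy: a longer grace period avoids needless rebuilds after a network blip, but delays the reaction to a drive that has really failed.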

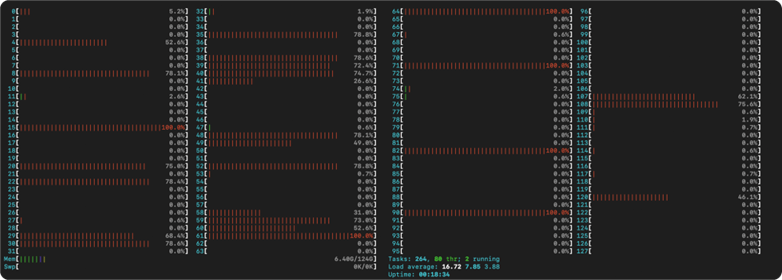

NVMe RAID rebuild from the CPU perspective

The figure above shows what happens on a server when an NVMe RAID is rebuilding: even an immensely powerful 128-core CPU has many of its cores loaded to 100% with parity recalculations.

Hardware RAID controllers

Several manufacturers offer hardware products that implement RAID. The implementation differs significantly based on the supported protocol(s). SATA drives have the lowest performance, latency and scaling requirements, making integration of hardware RAID logic easier. Most modern compute platforms have support for SATA RAID integrated into the south bridge of the motherboard with management integrated into the BIOS.

Hardware RAID is most strongly associated with SAS, as historically RAID was used in datacenters, where SCSI was pervasive (we’re intentionally skipping network-attached storage now, to be covered at the end of the post). For SAS, hardware RAID is usually the most natural solution with some notable exceptions. There are several features that make HW RAID good for SAS:

- SAS drives require a host-bus adapter. Unlike SATA, connectivity for SAS drives is not integrated into the chipset. Even servers that have onboard SAS rely on a discrete SAS chip. So, if you’re paying for connectivity anyway, it makes sense to offload RAID calculations there as well.

- External ports. SAS adapters come in assorted flavors, but most models are available with internal ports to connect to the server backplane, external ports to connect to one or more SAS JBODs, or both. This gives a lot of flexibility and adds scale to the setup. Literally hundreds of SAS drives can be connected to a single adapter and used as part of RAID volumes.

- Write cache. Most drives have part of their on-board RAM allocated to serve as a write-back cache. Using RAM to buffer writes notably increases drive performance at the cost of reliability. In case of an emergency power-off (EPO) event, the contents of the volatile RAM cache are lost, which can lead to data corruption or loss (there are new developments in this field that use NAND flash on the HDD to dump cache contents, but this is only relevant for the newest large-capacity HDDs). Because of this risk, write-back caching is disabled on the HDDs in most environments. HW RAID adapters can add write-back caching without the risk to data. As on the HDDs, a portion of the adapter’s on-board RAM can be allocated as a buffer to increase write performance, and a battery back-up unit (BBU) can be added to the adapter to protect cached data in case of an EPO event.

- Compatibility. HW RAID is largely plug-and-play. All configuration is stored on the card and drive management is available from the adapter BIOS, before booting into host OS. It means that the host sees RAID volumes as physical drives, which reduces OS management overhead and makes HW RAID compatible with almost all operating systems.

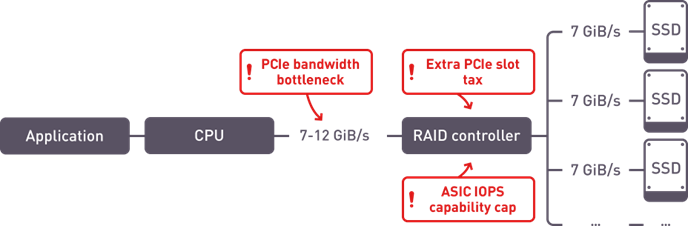

For NVMe, the availability of HW RAID options is currently limited. Out of the three major HW RAID manufacturers, only one has come up with an NVMe RAID implementation. While it has some of the familiar useful features of SAS RAID cards, it struggles to keep up with the performance of several NVMe drives and can become a bottleneck.

Problem points in HW RAID implementation for NVMe

- ASIC IOPS capability. Even the newest RAID chips have a cap on the number of IOPS they can process. With modern PCIe Gen4 NVMe SSDs pushing above 1M IOPS per drive, even 3-4 drives can saturate a RAID adapter.

- PCIe bandwidth bottleneck. With the RAID adapter sitting on the PCIe bus between the drives and the CPU, system performance is limited to the bandwidth of a single PCIe slot.

- Latency. One of the key factors in the success of NVMe is low latency. It comes from the fact that the drives attach directly to the PCIe bus, without any intermediate devices between the SSDs and the CPU. Extra hardware between the drives and the CPU adds latency and negates one of the key advantages of NVMe.
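The first two points in the list above can be checked with simple arithmetic. The Python sketch below uses the nominal PCIe Gen4 rate (16 GT/s per lane with 128b/130b encoding) and ignores protocol overhead. The adapter slot width and the adapter IOPS cap are assumptions made for illustration only; the per-drive IOPS figure is the one quoted in the list.

```python
import math

# PCIe Gen4: 16 GT/s per lane, 128b/130b encoding, 8 bits per byte
GEN4_GB_PER_S_PER_LANE = 16 * 128 / 130 / 8  # about 1.97 GB/s per lane

def link_bandwidth_gb_s(lanes: int) -> float:
    """Nominal one-direction bandwidth of a Gen4 link, ignoring protocol overhead."""
    return lanes * GEN4_GB_PER_S_PER_LANE

def drives_to_saturate(limit: float, per_drive: float) -> int:
    """Smallest number of drives whose combined rate reaches the limit."""
    return math.ceil(limit / per_drive)

ADAPTER_LANES = 16            # assumption: RAID adapter in an x16 slot
DRIVE_LANES = 4               # typical NVMe SSD link width
ADAPTER_IOPS_CAP = 3_000_000  # hypothetical cap of the RAID chip
DRIVE_IOPS = 1_000_000        # "above 1M IOPS per drive" from the list above

slot = link_bandwidth_gb_s(ADAPTER_LANES)
drive = link_bandwidth_gb_s(DRIVE_LANES)
print(f"x{ADAPTER_LANES} slot: {slot:.1f} GB/s, x{DRIVE_LANES} drive link: {drive:.1f} GB/s")
print("drives to fill the slot:", drives_to_saturate(slot, drive))
print("drives to hit the IOPS cap:", drives_to_saturate(ADAPTER_IOPS_CAP, DRIVE_IOPS))
```

With these numbers, four drives running at full link speed already fill an x16 slot, and an adapter in an x8 slot would be filled by two.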

Software RAID

Another way of adding RAID benefits to the host is by using software RAID or volume managers. Such products can improve flexibility of storage allocation on a server, adding data reliability and performance features. There is a multitude of products to choose from, with most of them being OS-specific. This is one of the weaker sides of SW RAID, since data migration between different operating systems is made more difficult and requires an additional set of skills from the storage administrator.

SW RAID implementations range from simpler abstraction products like Linux kernel mdraid, to larger and more feature-rich volume managers like Veritas, LVM or Storage Spaces, to file systems with built-in RAID functionality like ZFS or Btrfs. But even with this level of diversity, there are some key commonalities.

- SW RAID uses host resources – CPU and RAM – to provide data services.

- OS-specific. With some exceptions like Veritas, most SW RAID packages are designed for a limited number of operating systems.

- Flexible and feature rich. SW RAID is usually abstracted from the underlying hardware and can use several types of storage devices, sometimes in the same RAID group.

The main strength of software RAID is flexibility. If you can see your original storage device as a disk drive in your OS, SW RAID can work with it. The same software can be used with local devices over all protocols (SATA, SAS, NVMe, even USB), with network-attached block storage like FC or iSCSI, and with the new NVMe-oF targets.

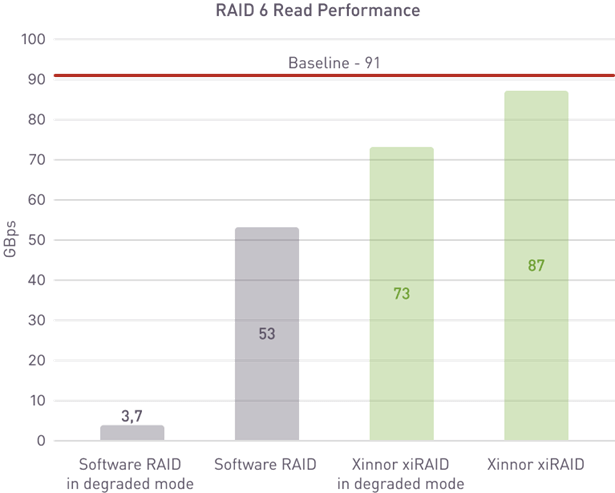

Host resource consumption and performance are generally the weak points of software RAID. In terms of performance, most software products work very well with HDDs, begin to struggle with SSDs and drastically underperform with NVMe. (Even with HDDs, reconstruction of large drives still takes a lot of time, and where that is critical, more advanced technologies like declustered RAID need to be considered.) This is mainly because their code base was developed for HDD levels of performance and doesn’t account for the high parallelism, huge number of IO operations and throughput that NVMe delivers. A notable exception is Xinnor xiRAID, which was designed from the ground up for NVMe and other SSDs.

Performance comparison between a popular SW RAID package and xiRAID

When it comes to host resource consumption, again, most SW RAID products can handle HDD levels of throughput and simple RAID logic like RAID1 or RAID10 without any significant impact on the host. When dealing with hundreds of thousands or millions of IOPS and tens of gigabytes per second of throughput, software RAID starts using significant amounts of host resources, possibly starving business applications. xiRAID relies on a relatively rarely used feature of modern x86 CPUs called AVX (Advanced Vector Extensions) and has a lockless architecture that helps spread computation evenly across CPU cores. This highly effective approach took years of research, but it makes it possible to compute RAID parity on the fly for tens of millions of IOPS with negligible effect on host resources. It also enables fast reconstruction of a RAID after a drive failure, minimizing the window of increased risk of data loss and degraded performance.
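The benefit of vector instructions is that one operation processes many bytes at once. The Python sketch below is only an analogy and not how xiRAID is implemented: it compares XOR-ing two blocks byte by byte with XOR-ing them as one wide integer, which pushes the loop down into optimized native code. Block size and repetition count are arbitrary assumptions, and the timings will vary by machine.

```python
import os
import timeit

def xor_bytewise(a: bytes, b: bytes) -> bytes:
    """XOR two equal-length blocks one byte at a time."""
    return bytes(x ^ y for x, y in zip(a, b))

def xor_wide(a: bytes, b: bytes) -> bytes:
    """XOR two equal-length blocks as a single wide integer operation."""
    wide = int.from_bytes(a, "little") ^ int.from_bytes(b, "little")
    return wide.to_bytes(len(a), "little")

block_a = os.urandom(64 * 1024)  # two random 64 KiB blocks
block_b = os.urandom(64 * 1024)

# Both approaches must produce the same parity block
print(xor_bytewise(block_a, block_b) == xor_wide(block_a, block_b))

slow = timeit.timeit(lambda: xor_bytewise(block_a, block_b), number=20)
fast = timeit.timeit(lambda: xor_wide(block_a, block_b), number=20)
print(f"byte by byte: {slow:.4f} s, wide: {fast:.4f} s")
```

Real SIMD code applies the same principle in hardware registers, and parity schemes beyond plain XOR (such as the second parity in RAID 6) need additional arithmetic that this toy example does not cover.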

Pros and cons

Hardware RAID

Pros:

- Cross-OS

- Write cache with battery backup

- Easy to manage and migrate between servers

- Provides physical connectivity

- Doesn’t consume host resources

- Reliable performance for HDDs and most SATA/SAS SSD configurations

Cons:

- Requires physical purchase and free expansion slot(s) on the server

- Added latency

- Strict compatibility matrices for supported drives, servers, JBODs, cables

- Firmware has limited space, so don’t expect new features with software updates

- Simple feature sets

- Can’t work with networked devices

- Bottleneck for NVMe

Software RAID

Pros:

- Hardware-agnostic, can combine drives of varied sizes and interfaces

- Many free options

- Flexible and feature rich

- Works with networked storage

- Easy to include new features in software updates

Cons:

- OS-specific

- Uses host resources – CPU and RAM (solved in xiRAID)

- Low performance with NVMe (solved in xiRAID)

- No write cache or physical ports

Which RAID is better in 2024?

As usual, it depends on the use case. For most SATA installations, an onboard RAID controller or a volume manager that ships with the OS should do the trick. For SAS, the most natural choice is a hardware RAID adapter. For large installations with high-capacity drives, consider advanced commercial software RAID products and/or declustered RAID. For NVMe/NVMe-oF under Linux, xiRAID would be the best-performing and most flexible solution. For Windows and other operating systems, hardware RAID or software mirroring are likely the best options today, but stay tuned for development news from us and possible new DPU-based products.