About

The SC4200 (Athena G2) represents a cutting-edge 2U rackmount NVMe platform, boasting 24 PCIe NVMe dual-port SSDs and redundant computing nodes fueled by Intel® Xeon® Scalable processors.

With the combined might of dual Intel® Xeon® Scalable Processors, this system guarantees outstanding performance even under peak loads. Its all-flash architecture ensures swift responsiveness, tailored to a wide array of business applications and environments. Redundant access to all hot-swappable NVMe modules and power supplies, alongside optional battery backup units, works to maximize uptime, fortifying system reliability and data integrity, even in challenging conditions.

Challenge

The rise of Artificial Intelligence calls for storage fast enough to keep GPUs fully engaged. Deep learning models, the backbone of AI, consume vast amounts of data during training, repeatedly analyzing extensive datasets to improve their performance. GPUs, the specialized processors behind many AI operations, excel at processing this data, yet their efficiency drops when data delivery lags. Given the high cost of GPUs, maximizing their utilization is paramount. Slow storage creates bottlenecks, resulting in idle periods where GPUs wait for data instead of processing it. Faster storage keeps data flowing so that GPUs remain consistently active and productive. Every moment a GPU spends idle waiting for data extends training times, delaying project timelines and increasing resource costs. To address this critical need in AI workloads, Xinnor has introduced xiSTORE.
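The cost of idle GPUs can be made concrete with a back-of-the-envelope model. The sketch below is only an illustration: it assumes storage throughput is the sole bottleneck and that utilization scales linearly with delivered bandwidth. The function names and the example numbers (40 GB/s required, 10 GB/s delivered, 100 compute hours) are hypothetical and do not come from the test described here.

```python
def gpu_utilization(storage_gbps: float, required_gbps: float) -> float:
    """Fraction of time the GPUs are busy, assuming storage is the only bottleneck."""
    if storage_gbps <= 0 or required_gbps <= 0:
        raise ValueError("throughput values must be positive")
    # GPUs cannot be more than 100% busy, so cap the ratio at 1.0.
    return min(1.0, storage_gbps / required_gbps)


def training_time_hours(compute_hours: float,
                        storage_gbps: float,
                        required_gbps: float) -> float:
    """Wall-clock training time once time spent waiting for data is included."""
    return compute_hours / gpu_utilization(storage_gbps, required_gbps)


# Hypothetical job: needs 40 GB/s to keep its GPUs fed, storage delivers 10 GB/s.
print(gpu_utilization(10.0, 40.0))              # 0.25
print(training_time_hours(100.0, 10.0, 40.0))   # 400.0
```

In this simplified model, storage that delivers a quarter of the required bandwidth turns 100 hours of GPU work into 400 hours of wall-clock time.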

Solution

To meet the demand for rapid storage in Artificial Intelligence workloads, the integration of xiSTORE with the SC4200 (Athena G2) platform offers a comprehensive solution. xiSTORE, a Software Defined Storage (SDS) solution tailored for the HPC and AI markets, combines the robustness of xiRAID, the fastest RAID engine, with the Lustre clustered file system and commodity hardware. The result is an efficient, flexible, and scalable storage infrastructure suited to the demands of AI tasks.

The integration of xiSTORE with the SC4200 (Athena G2) platform ensures a seamless flow of data, maximizing the utilization of GPU resources and minimizing idle time. This collaboration results in accelerated training times, streamlined development schedules, and optimized resource costs, effectively addressing the challenges posed by the need for fast storage in AI environments.

Configuration

- CPU: 2x Intel(R) Xeon(R) Gold 6336Y CPU @ 2.40GHz;

- Memory: 32 GB x 16 = 512 GB, Samsung M393A4K40DB3-CWE;

- Drives: 22x Kioxia CM6-R KCM61RUL3T84 dual port NVMe;

- RAID configuration: 20 NVMe drives in 4 RAID 6 groups (8d+2p), with each drive split into 2 namespaces (2 block devices per drive) to avoid PCIe x2 limits; 2 NVMe drives in RAID 0;

- Lustre configuration: 4 virtual machines, each with 1 OSS / 1 OST (the MDS, on the 2-drive NVMe RAID 0, is located on one of the virtual machines);

- OS: Rocky Linux 8.7, Lustre 2.15.2;

- 20 Lustre clients:

  - Oracle Linux 8.8;

  - Lustre 2.15.3.

- Performance benchmarking tool: IOR
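The RAID layout above can be checked with a little arithmetic. The sketch below confirms that 20 drives with 2 namespaces each supply exactly the 40 members needed for 4 RAID 6 groups of 8 data + 2 parity. The 3.84 TB per-drive capacity is an assumption inferred from the "3T84" in the drive model number, the namespaces are assumed to be equal in size, and the capacity figures are decimal TB before any file system overhead.

```python
# Values taken from the configuration list.
RAID6_DRIVES = 20
NAMESPACES_PER_DRIVE = 2
RAID6_GROUPS = 4
DATA_MEMBERS = 8      # the "8d" in 8d+2p
PARITY_MEMBERS = 2    # the "2p" in 8d+2p

# Assumption: 3.84 TB per drive, inferred from the model number KCM61RUL3T84.
DRIVE_TB = 3.84

block_devices = RAID6_DRIVES * NAMESPACES_PER_DRIVE    # 40 namespaces
members_per_group = DATA_MEMBERS + PARITY_MEMBERS      # 10 members per group

# Every namespace must belong to exactly one RAID 6 group.
assert block_devices == RAID6_GROUPS * members_per_group

namespace_tb = DRIVE_TB / NAMESPACES_PER_DRIVE         # assumes equal-sized namespaces
raw_tb = block_devices * namespace_tb
usable_tb = RAID6_GROUPS * DATA_MEMBERS * namespace_tb
efficiency = usable_tb / raw_tb

print(f"block devices: {block_devices}")               # block devices: 40
print(f"raw capacity: {raw_tb:.2f} TB")                # raw capacity: 76.80 TB
print(f"usable capacity: {usable_tb:.2f} TB")          # usable capacity: 61.44 TB
print(f"capacity efficiency: {efficiency:.0%}")        # capacity efficiency: 80%
```

How the two namespaces of a given drive are distributed across the RAID groups is not stated in the configuration, so the sketch only counts members and does not model placement.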

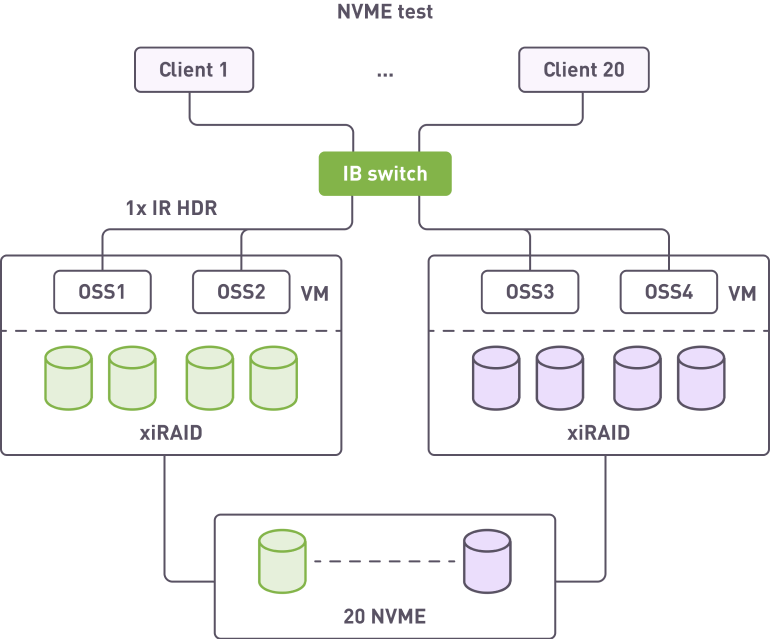

Test configuration setup is presented on the picture:

IOR Test parameters are:

Conclusion

After testing, the following results were achieved:

Random Read: over 3M IOPS

Sequential Read: over 82 GBps

Sequential Write: over 63 GBps
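To put these aggregate figures in perspective, they can be divided across the 20 Lustre clients, the 4 OSTs, and the 20 NVMe drives in the RAID 6 groups. The sketch below is plain arithmetic on the published numbers, not additional measurements: it treats each "over X" result as a lower bound, reads GBps as gigabytes per second, and assumes load is spread evenly, which the test does not guarantee.

```python
# Aggregate results reported above (each is a lower bound: "over ...").
RESULTS = {
    "sequential_read_gbps": 82.0,
    "sequential_write_gbps": 63.0,
    "random_read_iops": 3_000_000,
}

# Component counts from the test configuration.
CLIENTS = 20
OSTS = 4
RAID6_DRIVES = 20


def per_unit(total: float, units: int) -> float:
    """Even split of an aggregate figure across identical units (an idealization)."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total / units


for name, total in RESULTS.items():
    print(
        f"{name}: "
        f"{per_unit(total, CLIENTS):,.2f} per client, "
        f"{per_unit(total, OSTS):,.2f} per OST, "
        f"{per_unit(total, RAID6_DRIVES):,.2f} per drive"
    )
```

Under these assumptions, sequential reads work out to at least 4.1 GBps per client and 20.5 GBps per OST, and random reads to at least 150,000 IOPS per client.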

Extensive testing has showcased the impressive performance capabilities of the integrated solution featuring xiSTORE and Celestica’s SC4200 platform. The symbiosis between SC4200's strong configuration and xiSTORE's cutting-edge functionality not only yields exceptional performance but also ensures unparalleled reliability. The performance demonstrated, coupled with the architecture's absence of single points of failure, presents an ideal solution for delivering rapid storage to execute intricate AI models and maximize GPU utilization.

Download the solution brief to learn more about test setup and results: https://xinnor.io/files/